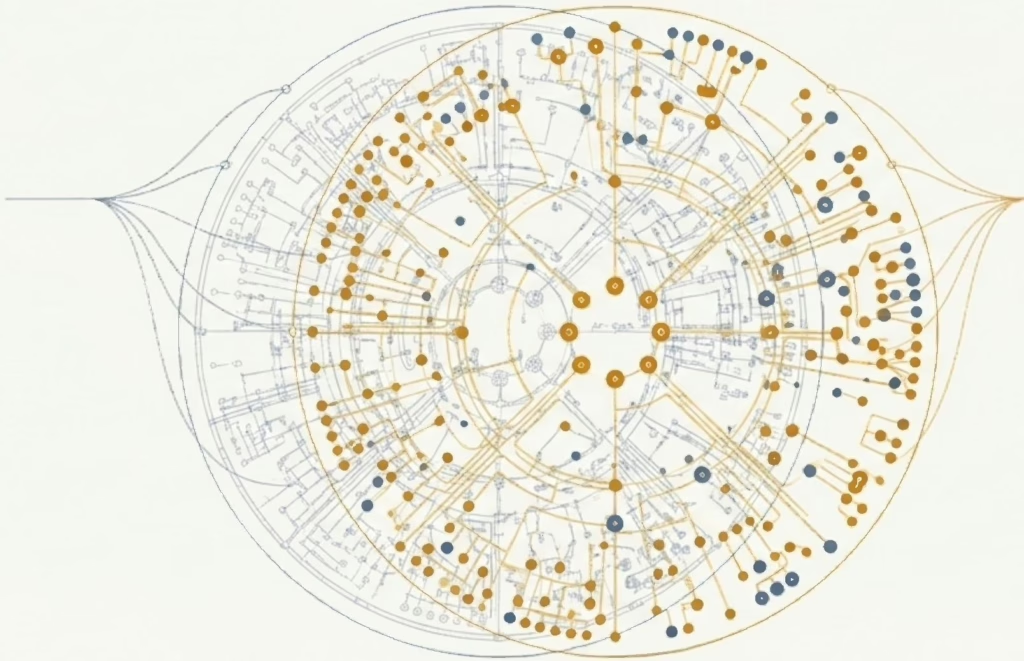

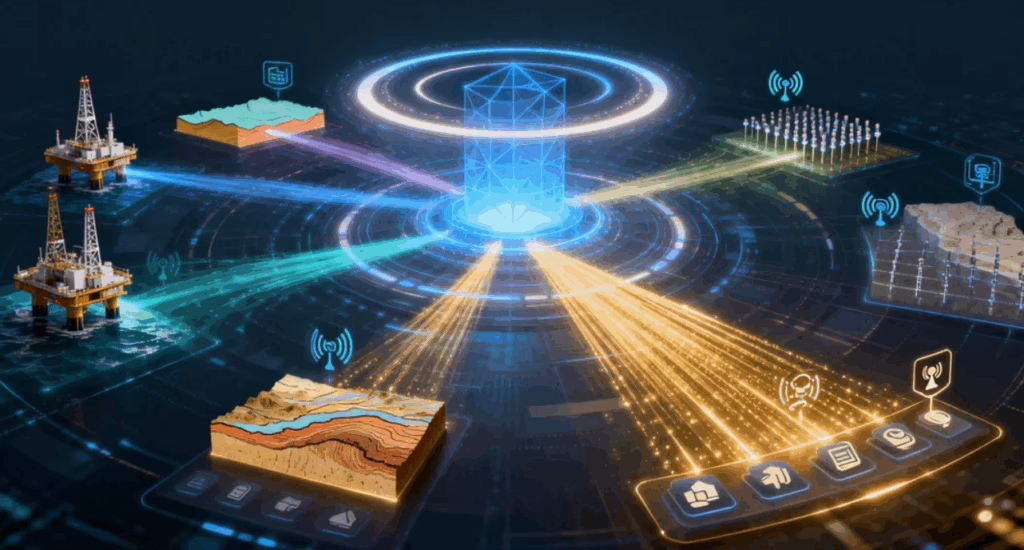

JuraData includes a comprehensive built-in methodology for business modeling and standard modeling. It supports systematic analysis and standardized modeling of data across more than 16,000 business nodes in the oil and gas industry.

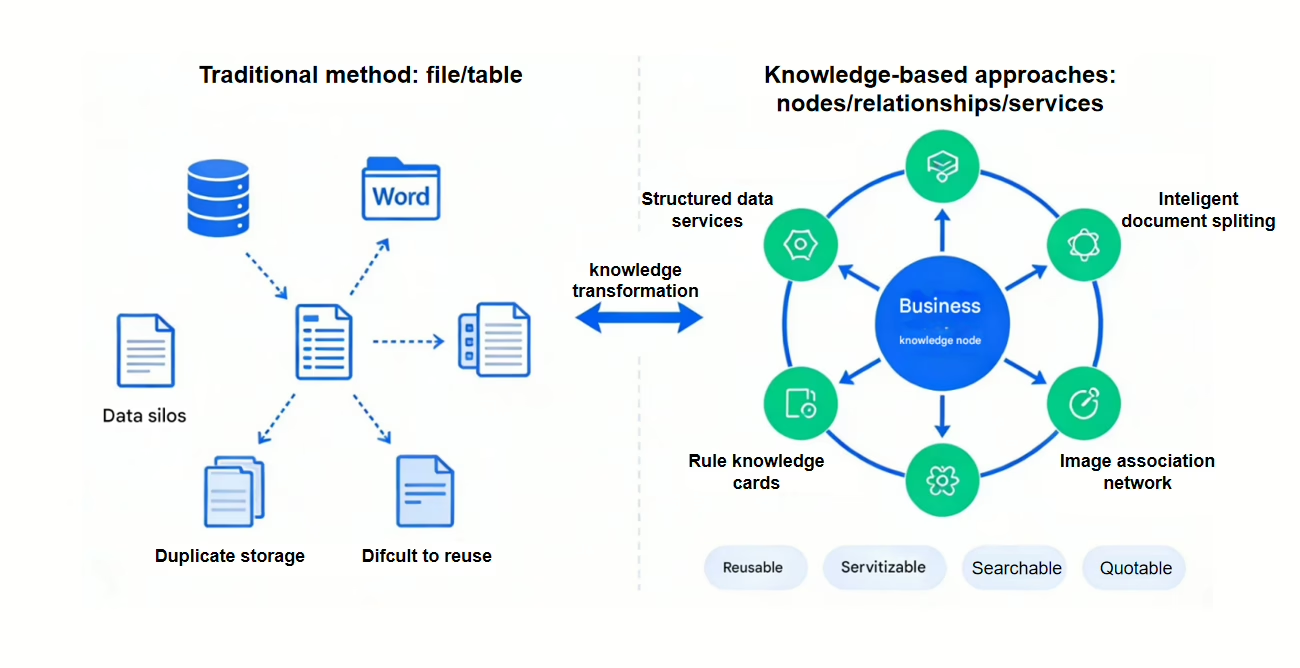

The platform covers a wide range of domain-specific data types, including structured, unstructured, logging, seismic, and graphical data, and is fully compatible with the OSDU standard.